28. Jahrestagung der Deutschen Gesellschaft für Audiologie e. V.

Dysphagia detection using near-ear sensors and machine learning

Research question: Swallowing disorders affect approximately 600 million individuals globally and are a major risk factor for aspiration pneumonia. Current diagnostic methods, including FEES (Fiberoptic Endoscopic Evaluation of Swallowing) and VFSS (Videofluoroscopic Swallowing Study), are highly accurate but invasive, require specialized personnel, and are unsuitable for repeated assessments. This research investigates whether dysphagia can be detected accurately and robustly from non-invasive near-ear sensor signals combined with deep learning methods, with performance comparable to clinical gold-standard assessments. The ultimate goal is an accessible, non-invasive dysphagia screening and monitoring tool suitable for repeated use in clinical and home environments.

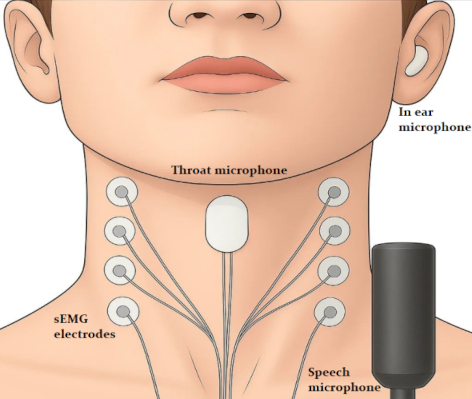

Method: Our system integrates four sensors: an in-ear microphone to record intra-aural click sounds [1], which serve as a temporal indicator of swallowing; surface electromyography (sEMG) to detect swallowing muscle activation patterns [2] and the laryngeal elevation curve; a piezoelectric microphone to detect cervical swallowing sounds; and a speech microphone. The study includes 30 healthy controls and 30 participants diagnosed with dysphagia. Participants will complete two protocols: swallowing tasks with different viscosities (water, saliva, and jelly), followed by speech tasks (conversational speech, sustained vowels, and diadochokinetic exercises). The features will include the interval between successive click sounds during swallowing, the interval between the initiation of swallowing and the point at which the bolus reaches the hypopharynx, the activation patterns of the swallowing muscles, the laryngeal activation duration, and selected speech biomarkers. We hypothesize that all of these features differ significantly between the two groups. After data collection, the next step will be to develop and test a multimodal deep learning algorithm that takes the patient data and classifies the person as dysphagic or healthy.
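As an illustration of the first feature listed above, the interval between successive click sounds can be derived from detected click onset times. The following is a minimal sketch that assumes the onsets have already been detected and are given in seconds; the function name `inter_click_intervals` and the example timestamps are hypothetical and are not part of the study protocol.

```python
from typing import List, Sequence


def inter_click_intervals(onsets_s: Sequence[float]) -> List[float]:
    """Return the time gaps (in seconds) between successive click onsets.

    Assumption: `onsets_s` holds click onset times in seconds from one
    swallow recording; click detection itself is not shown here.
    """
    ordered = sorted(onsets_s)  # tolerate onsets supplied out of order
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


if __name__ == "__main__":
    # Hypothetical onset times, for illustration only
    example_onsets = [0.25, 0.75, 1.5]
    print(inter_click_intervals(example_onsets))
```

A recording with fewer than two detected clicks yields an empty list, so downstream feature extraction would need to handle that case explicitly.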

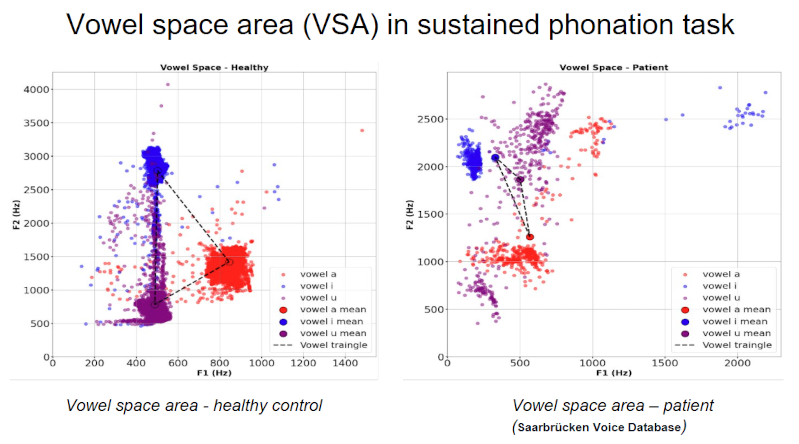

Results: Preliminary data from the sustained phonation task showed observable differences in vowel space area (VSA) between healthy individuals and those with swallowing problems. These findings are consistent with prior studies that report significant differences in speech features between the two groups. We are currently also working on detecting the intra-aural click sounds and the cervical swallowing sounds using the Hearpiece and a laryngeal contact microphone.
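The abstract does not state how VSA was computed. One common convention is to take the area of the polygon spanned by the corner vowels in the F1-F2 formant plane, which the shoelace formula gives directly. The sketch below assumes that convention; the function name `vowel_space_area` and the formant values are hypothetical illustrations, not study data.

```python
from typing import Sequence, Tuple

Formants = Tuple[float, float]  # (F1 in Hz, F2 in Hz)


def vowel_space_area(corners: Sequence[Formants]) -> float:
    """Area (Hz^2) of the polygon whose vertices are the corner vowels.

    Assumption: `corners` lists the vowels in order around the polygon
    (e.g. /i/, /a/, /u/), each as an (F1, F2) pair in Hz.
    """
    if len(corners) < 3:
        raise ValueError("at least three corner vowels are required")
    twice_area = 0.0
    n = len(corners)
    for k in range(n):
        f1_a, f2_a = corners[k]
        f1_b, f2_b = corners[(k + 1) % n]  # wrap around to close the polygon
        twice_area += f1_a * f2_b - f1_b * f2_a  # shoelace cross term
    return abs(twice_area) / 2.0


if __name__ == "__main__":
    # Hypothetical formant values for /i/, /a/, /u/, for illustration only
    example = [(300.0, 2300.0), (750.0, 1300.0), (350.0, 800.0)]
    print(vowel_space_area(example))
```

Taking the absolute value makes the result independent of whether the vowels are listed clockwise or counterclockwise.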

Conclusion: We expect our multi-sensor approach to show that non-invasive, accurate dysphagia detection is feasible. This method could complement current gold standards, enabling broader screening and early intervention for at-risk populations.

Figure 1 [Fig. 1]

Figure 2 [Fig. 2]

References

[1] Haji T, Yamaguchi Y. Intra-aural swallowing sound analysis with simultaneous videofluoroscopy and cervical swallowing sound recording. Auris Nasus Larynx. 2025 Feb;52(1):20-26. DOI: 10.1016/j.anl.2024.11.003

[2] Ko JY, Kim H, Jang J, Lee JC, Ryu JS. Electromyographic activation patterns during swallowing in older adults. Sci Rep. 2021 Mar 11;11(1):5795. DOI: 10.1038/s41598-021-84972-6